Previously, we have looked at three proposed solutions for the -armed bandit problem: explore-then-commit (ETC), upper confidence bounds (UCB), and Thompson sampling (TS). The regret bounds in the first two algorithms work for general

-sub-Gaussian bandit instances (i.e.

is

-sub-Gaussian for each

), while the latter assumes a Gaussian bandit instance (i.e.

for each

).

We will assume this stricter condition, since Gaussian distributions with variance are, in fact,

-sub-Gaussian. Given this information, and the bandit instance

, which algorithm wins out? We first recall the following regret upper bounds for ETC and UCB:

The ETC strategy, though simple, is a bit too simplistic. Disregarding the fact that our exploration phase directly impacts the regret bound, even if we assumed

to be merely constant (and not growing in

), the right hand side yields an upper bound of the form

, which tends to

as

. The UCB algorithm, on the other hand, yields a long-run regret bound of

, which is constant and hence ideal.

Round 1: UCB wins against ETC.

Where does Thompson sampling come into the picture? Let’s compare their regret bounds:

Trivially, , so that we get the following.

Round 2: Thompson sampling wins against UCB.

Remark 1. The reality is, however, a lot more subtle. It turns out that a clever choice of that depends on

can allow the upper bound for ETC to converge. Furthermore, the UCB algorithm can be modified (Chapter 8 of Lattimore and Szepesvari (2020)) to recover the constant

, and arguably is more extensive than Thompson sampling, since the UCB algorithm works for a wider class of bandits (namely,

–sub-Gaussians rather than just Gaussians with variance

). The UCB algorithm is even more straightforward to generalise from a theoretical perspective, while TS analyses become highly technical—even in the simple case of Gaussians with variance

.

But here’s the problem—how do we know that Thompson sampling or the modified UCB in Remark 2 are really the best algorithms for a bandit instance of Gaussians with variance ? We need to develop the notion of asymptotic optimality. We take inspiration from Chapter 16 of Lattimore and Szepesvari (2020).

Denote and denote the environment of

-armed

-Gaussian bandits by

.

Let denote a risk functional.

Definition 1. A policy (i.e. bandit algorithm) is –consistent over a bandit environment

if for any

and

,

as

.

In particular, UCB and TS are -consistent over

since for any

and

they have constants

(depending only on

) such that

For any policy consistent over the -armed bandit environment

it turns out that we have a very elegant regret lower bound, that even extends to the generalised risk setting. To describe this lower-bound, however, requires us to explore some baby information theory, and in particular, the Kullback-Leibler divergence.

Definition 2 (KL-Divergence). Let denote the Lebesgue measure on

. For probability measures

, if

, define the KL-divergence of

relative to

by

where .

Example 1. Given the Gaussian distributions ,

In the special case ,

Furthermore, if , then

.

Proof. See this page.

Definition 3. Fix . Define

Now let denote a bandit environment.

Example 2. For and

,

where .

Proof. By definition,

By Example 1, given such that

, we have

, so that

Taking ,

Why do we care about the optimised KL-divergence? Because it plays a fundamental role in (asymptotically) lower-bounding the cumulative regret!

Theorem 1. Let be a consistent policy over the

-armed bandit environment

Then for any with unique

-optimal arm

,

Proof. We follow the proof of Theorem 16.2 in Lattimore and Szepesvari (2020). Denote the bandit instance by . Fix

arbitrary. Denote

for brevity. By Definition 3, there exists

such that

and

Define the alternate bandit instance by

for a suitably chosen . We claim that

. By the divergence decomposition lemma in Lattimore and Szepesvari (2020) (Lemma 15.1),

which implies that

Denote and

. Then similar to the writeup,

Performing algebra,

Since is consistent,

and

as

, which yields

, so that

Taking yields the desired result.

Example 3. For any policy that is

-consistent over

, given that

is uniquely optimal, Example 3 yields

Definition 4. Using the notation in Theorem 1, we say that is asymptotically optimal over

if for any

,

Corollary 1. Thompson sampling is asymptotically optimal over .

Proof. By previous discussions,

so that Thompson sampling is -consistent over

. By Example 3,

By Definition 4, Thompson sampling is asymptotically optimal over :

In that sense, Thompson sampling is the ultimate winner! And of course, the modified UCB that achieves the same regret bound is also the ultimate winner over . Yes, Definition 4 admits multiple ultimate winners.

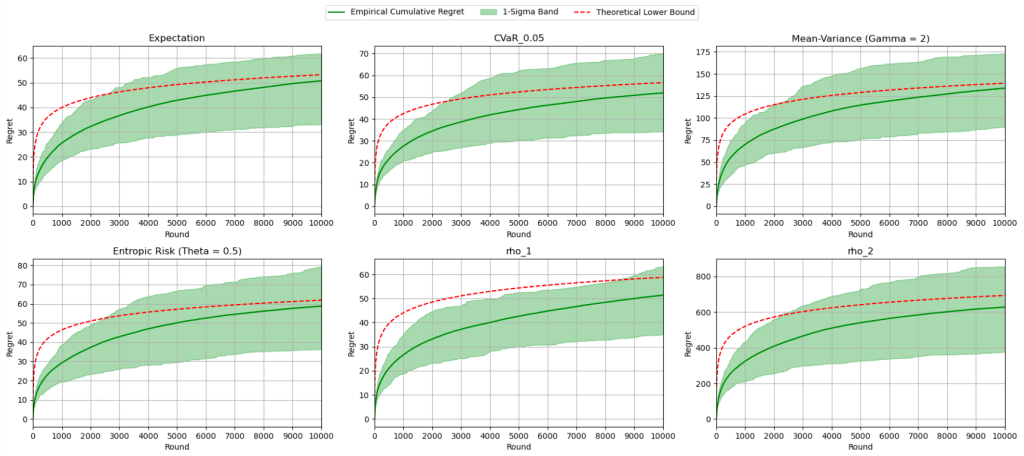

And the discussion is far from over. Denoting , my joint work with my research supervisor shows that

-TS is the ultimate winner over the bandit environment

for many types of risk functionals, including but not limited to the following famous ones:

- Expectation:

,

- Variance:

,

- Conditional value-at-risk:

,

- Mean-variance:

,

- Entropic risk:

,

.

Denoting the collection of probability distributions with support bounded in by

, my ambition was to show that

-TS is the ultimate winner over the bandit environment

. Sadly, it was too ambitious, and is left as a highly non-trivial (possibly impossible?) exercise for the aspiring undergraduate. Perhaps it might work for the various possible

?

, i.e. the collection of

with continuous p.d.f.s.,

, i.e. the collection of

-Gaussian distributions,

, i.e. the collection of

-sub-Gaussian distributions?

I honestly don’t know, and don’t have enough funding in time or money to find out. But I have to say that the nostalgia of revisiting this topic four years later brought me a much-needed closure from those research years.

For now, we are done.

Happy Valentine’s Day!

—Joel Kindiak, 14 Feb 26, 1602H